Observability, Reimagined for the AI Era

Observability 3.0 brings AI-native, unified infrastructure, application, and LLM observability together in a single platform.

Move from reactive firefighting to proactive, autonomous operations. 140× lower storage costs. Zero database management.

Legacy stacks weren't built for the AI era

Legacy observability solutions were designed for static infrastructure. They cannot manage the telemetry volume of modern AI workloads, forcing customers onto data diets that kill the context needed for root-cause analysis.

Siloed tooling

Separate platforms for LLM observability, incident triage, and front-end monitoring, each with its own instrumentation, UI, and ops overhead.

Reactive, not proactive

Users discover incidents before engineers do, because legacy tools' alerting triggers only once something is already broken.

Escalating costs

High-volume LLM telemetry drives costs up sharply as usage grows, often pushing organizations toward aggressive sampling that compromises data fidelity.

One platform.

All of your telemetry. Autonomous intelligence.

OpenObserve replaces your entire observability stack with a single platform handling logs, metrics, traces, real user monitoring, and LLM telemetry in one interface. No separate databases, no data diets, no stitching tools together.

Three interconnected AI capabilities

Each capability is powerful on its own. Together they create a self-healing, autonomous observability loop.

Anomaly detection

Early warning signals before an incident occurs. Proactive alerts in your existing workflow so your team can respond, not react.

- ✓ Statistical baseline modeling

- ✓ Correlated signal detection

- ✓ Alert fatigue reduction

- ✓ Works across all telemetry types
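For readers curious what statistical baseline modeling can look like in practice, here is a minimal sketch, assuming a simple trailing-window z-score. The function name `find_anomalies`, the window size, and the threshold are hypothetical choices for illustration; this is not a description of how OpenObserve implements anomaly detection.

```python
from statistics import mean, stdev


def find_anomalies(values, window=5, threshold=3.0):
    """Return indices of points that deviate sharply from a trailing baseline.

    Illustrative assumption: the baseline is the mean and standard deviation
    of the previous `window` points, and a point is anomalous when its
    z-score exceeds `threshold`.
    """
    anomalies = []
    for i in range(window, len(values)):
        baseline = values[i - window:i]
        mu = mean(baseline)
        sigma = stdev(baseline)
        if sigma == 0:
            # Perfectly flat baseline: any change at all counts as a deviation.
            if values[i] != mu:
                anomalies.append(i)
            continue
        if abs(values[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


# Example: request latencies in milliseconds with one obvious spike.
latencies_ms = [100, 102, 98, 101, 99, 100, 250, 101]
spike_indices = find_anomalies(latencies_ms)
```

A production system would typically also account for seasonality and correlate several signals before alerting; the sketch shows only the baseline comparison.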

AI SRE

An autonomous layer that analyzes telemetry context, identifies the root cause, and recommends or takes corrective action automatically.

- ✓ Root cause analysis at machine speed

- ✓ Context-aware remediation

- ✓ Incident summarization

- ✓ Integrates with existing runbooks

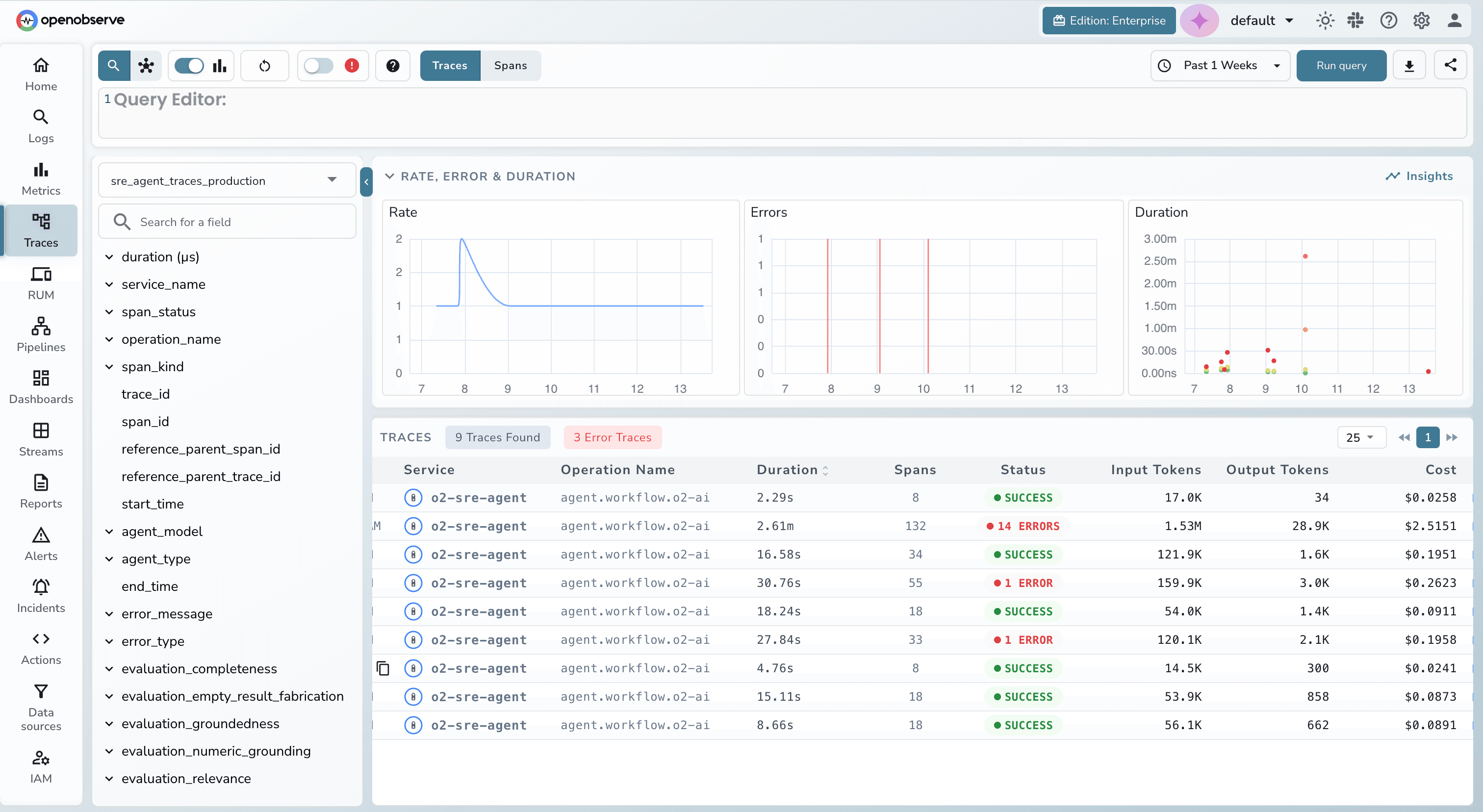

LLM observability

Extend OpenObserve's telemetry pipeline to cover prompt monitoring, eval tracking, and generative AI performance.

- ✓ Prompt & response monitoring

- ✓ Token usage and cost tracking

- ✓ Eval and quality tracking

- ✓ Model performance benchmarking
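As a rough illustration of token usage and cost tracking, the sketch below totals spend from per-call token counts. The model name, the per-1,000-token prices, and the `LLMCall` record are all hypothetical placeholders, not OpenObserve's schema or any provider's real pricing.

```python
from dataclasses import dataclass

# Hypothetical prices in USD per 1,000 tokens (placeholders, not real pricing).
PRICES_PER_1K = {
    "example-model": {"prompt": 0.5, "completion": 1.5},
}


@dataclass
class LLMCall:
    """One LLM request as it might appear in telemetry (illustrative fields)."""
    model: str
    prompt_tokens: int
    completion_tokens: int


def call_cost(call: LLMCall) -> float:
    """Cost of a single call: tokens times the per-1,000-token price."""
    price = PRICES_PER_1K[call.model]
    return (
        call.prompt_tokens * price["prompt"]
        + call.completion_tokens * price["completion"]
    ) / 1000


def total_cost(calls: list[LLMCall]) -> float:
    """Sum the cost of every recorded call."""
    return sum(call_cost(c) for c in calls)


calls = [
    LLMCall("example-model", prompt_tokens=1000, completion_tokens=2000),
    LLMCall("example-model", prompt_tokens=500, completion_tokens=0),
]
spend_usd = total_cost(calls)
```

Recording token counts per call, rather than only an aggregate bill, is what makes it possible to attribute cost to a specific prompt, feature, or user.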

The Tools You Need for Observability 3.0

Your roadmap for implementing and scaling modern observability.

Universal Integrations

→ The whole picture

Next-Gen Observability

→ Beyond the basics

Predictable Pricing

→ No hidden fees

Built for Scale

→ High performance

Radical Savings

→ Do the math

FAQs

Stop firefighting. Start shipping.

Move from reactive incident response to autonomous operations with Observability 3.0.

Free to start. No credit card required.